背景

客户N在使用H部门提供的MySQL遇到部分性能问题后,未得到H部门的及时支撑。机缘巧合,我们的服务化MySQL刚刚发布第一版,客户N有意切换我们的MySQL。由于部门策略调整,我们准备由原来的社区MySQL切换为部门R的商业版MySQL,其间对接问题不提,客户提出的首要问题是前期尝试通过mysqldump备份数据,发现有报错并且很慢,我们的策略是 为拓展业务先把锅接下来吧 答应先提供数据迁移方案供客户评估。

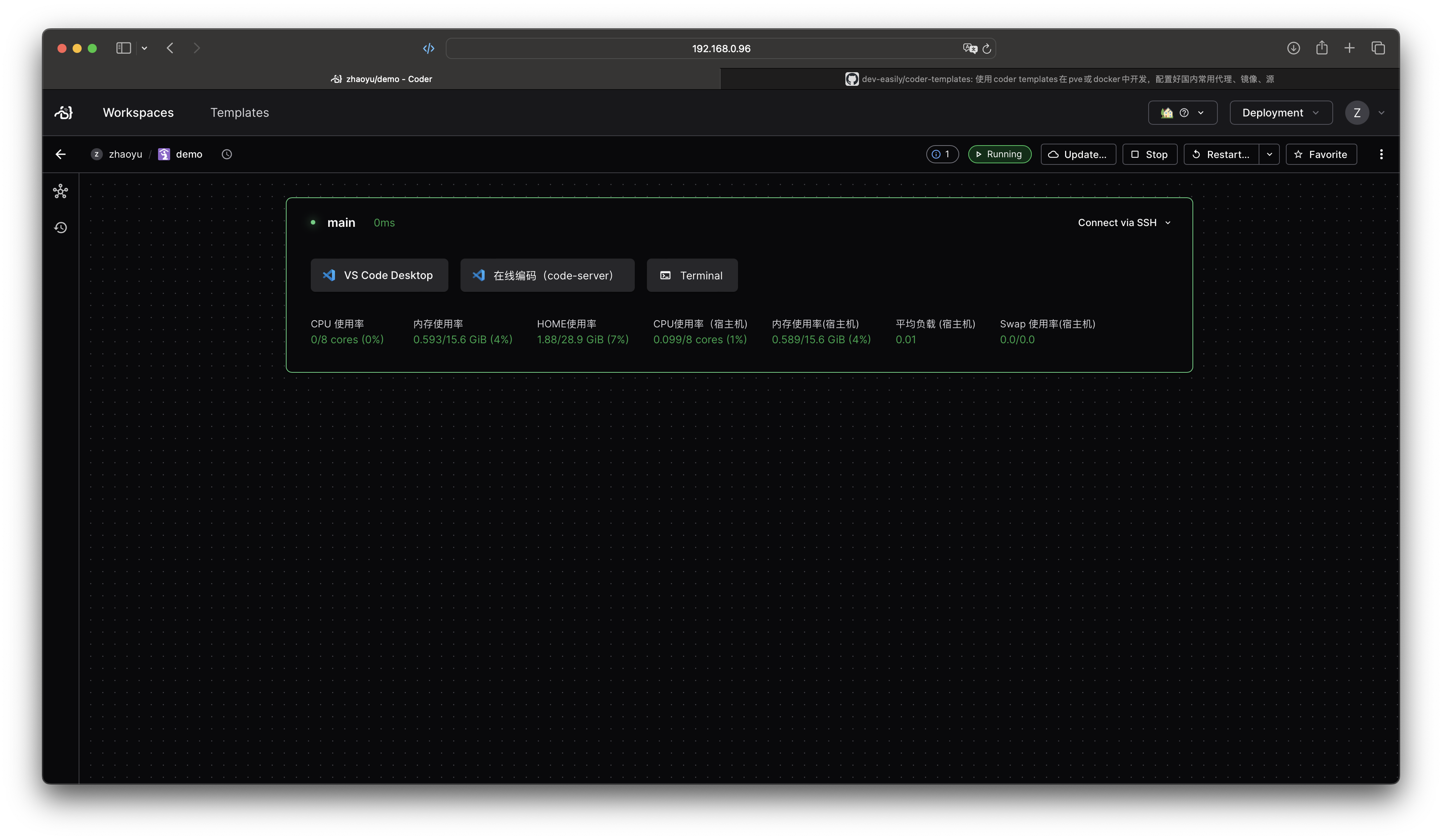

机器、数据、应用情况

源机器cpu核心数16,内存32G;

两台机器,一个是master,一个是slave;未配置互为主备;

开启了基于GTID的主从复制;

从镜像库来看,数据量3800W左右,实际生产环境每天还会增加约不到100w;

0-1w

1w-10w

10w-50w

50w-100w

100w-1000w

>1000w

表数量约

2105

83

28

5

6

1

MySQL为社区版5.7.23,所有表均为INNODB引擎;

据客户N的业务人员反馈,他们尝试使用mysqldump可能会报错。

一些准备工作

为了能够顺滑的开展后期工作,我习惯先整理一些常用的命令,以备随时复制粘贴…

-- 查询所有业务数据库的表名,数据库,存储引擎信息

select table_name,table_schema,engine from information_schema.tables where engine='innodb' and table_schema not in('mysql','information_schema','performance_schema','sys');

-- 查询所有业务数据库的表的数量

select count(*) from information_schema.tables where engine='innodb' and table_schema not in('mysql','information_schema','performance_schema','sys');

-- 查询所有表的数据量

SELECT CONCAT(TABLE_SCHEMA,'.',TABLE_NAME) AS table_name, IFNULL(TABLE_ROWS,0) as table_rows FROM information_schema.tables WHERE TABLE_SCHEMA NOT IN ('mysql','information_schema','performance_schema','sys') ORDER BY 2;

-- 查询所有业务数据库的视图数量

select table_name,table_schema from information_schema.views where table_schema not in ('mysql','information_schema','performance_schema','sys');

select count(*) from information_schema.views where table_schema not in ('mysql','information_schema','performance_schema','sys');

-- 查询所有routines(存储过程和函数)的数量

select * from mysql.proc where db not in ('mysql','information_schema','performance_schema','sys')\G

-- 查询所有触发器的数量

SELECT * FROM information_schema.triggers where TRIGGER_SCHEMA not in ('mysql','information_schema','performance_schema','sys')\G

-- 查询所有事件的数量

SELECT * FROM information_schema.EVENTS where EVENT_SCHEMA not in ('mysql','information_schema','performance_schema','sys')\G

-- 查询所有用户数量

select user,host from mysql.user;

-- 查看磁盘IO信息

iostat -x -p /dev/mapper/vg02-lv02 1 10 -m

iostat -x -p 1 10 -m

首先尝试使用原生mysqldump

业务诚不欺我,果然有坑,报错如下(安全需要,隐藏关键信息)。

mysqldump: Couldn’t execute SHOW FIELDS FROM XX: View XX.XX

references invalid table(s) or column(s) or function(s) or

definer/invoker of view lack rights to use them (1356)

报错信息很明显了,本次实践中,主要是视图引用创建语句中子查询的列不存在,select 都会报错,这个我们只能让业务自己去审视,决策是否删除或者修复。

由于通过mysqldump来发现那些视图有问题非常不效率,所有写了一个简单的脚本:

搜集所有有问题的视图.

#!/usr/bin/env bash

function usage {

echo "Usage: $0 [-u USER_NAME] [-p PASSWORD] [-d WORKDIR] [-D:DROP ERROR VIEWS]"

echo "Do not support -uroot, using -u root please."

# too 2

exit 2

}

function set_variable {

local varname=$1

shift

if [[ -z "${!varname}" ]]; then

eval "$varname=\"$@\""

else

echo "Error: $varname already set"

usage

fi

}

function execMysqlCommand {

mysql -u${USER_NAME} -p${PASSWORD} --skip-column-names -e "$1"

}

function checkView {

viewName="$1"

# too slow

# mysql -u${USER_NAME} -p${PASSWORD} -e "select 1 from ${viewName} limit 1" >/dev/null 2>>/home/mysql/temp/view_error

# not good either

# mysql -u${USER_NAME} -p${PASSWORD} -e "update ${viewName} set thisIsANotExistCol=123;" >/dev/null 2>>/home/mysql/temp/view_error

#

execMysqlCommand "show fields from ${viewName};" >/dev/null 2>>/home/mysql/temp/view_error

return $?

}

function checkAllViewsAndGetErrorViews {

echo "">/home/mysql/temp/view_error

i=1

for view in ${views[@]};do

echo -n "checking $view ...$i/${#views[@]}" "..."

checkView ${view}

result=$?

[[ ${result} -ne 0 ]] && echo "bad"

[[ ${result} -ne 0 ]] && echo "pass"

((i++))

done;

cat /home/mysql/temp/view_error|grep "1356"|awk -F"'" '{print $2}'>/home/mysql/temp/error_list

rm /home/mysql/temp/view_error -rf

error_views=(`cat /home/mysql/temp/error_list`)

}

function printIgnoreMsg {

[[ ${#error_views[@]} -gt 0 ]] && echo "You can add these statements to mysqldump to ignore those error views:"

for view in ${error_views[@]};do

echo -n " --ignore-table=${view}"

done

echo ""

}

function backupErrorViewsSql {

echo "Backing up create statement of error views to ${WORKDIR}..."

echo "" > /home/mysql/temp/backup_create_view_sql -rf

for view in ${error_views[@]};do

execMysqlCommand "show create view $view;" >>/home/mysql/temp/backup_create_view_sql 2>/dev/null

done

cat /home/mysql/temp/backup_create_view_sql|awk -F'\t' '{print $2";"}'|grep -v 'Create View;'>>/home/mysql/temp/backup_create_view

rm -rf /home/mysql/temp/backup_create_view_sql

echo "Done backing up create statement of error views."

}

function deleteErrorViews {

echo "Dropping error views..."

for view in ${error_views[@]};do

while [[ "X" == "X${confirm}" ]];do

read -p "please confirm to delete ${view}:(y/n)" confirm

done

if [[ "Xy" == "X${confirm}" ]];then

execMysqlCommand "drop view $view;" 2>/dev/null

fi

done

echo "Done dropping error views."

}

init() {

unset DELETE_VIEWS USER_NAME PASSWORD WORKDIR

while getopts 'u:p:d:D?h' option

do

case ${option} in

d) set_variable WORKDIR $OPTARG ;;

D) set_variable DELETE_VIEWS true ;;

u) set_variable USER_NAME $OPTARG ;;

p) set_variable PASSWORD $OPTARG ;;

h|?) usage ;; esac

done

[[ -z "${USER_NAME}" ]] && usage

[[ -z "${PASSWORD}" ]] && usage

[[ -z "${WORKDIR}" ]] && set_variable WORKDIR "/home/mysql/temp" && mkdir -p ${WORKDIR}

echo "Using directory ${WORKDIR} as temp dir."

}

getAllViews() {

echo "Getting all views from schema..."

views=(`execMysqlCommand "select concat(table_schema,'.',table_name) from information_schema.views where table_schema not in ('mysql','information_schema','performance_schema','sys');" 2>/dev/null`)

}

init $@

getAllViews

checkAllViewsAndGetErrorViews

printIgnoreMsg

[[ X"true" == X"${DELETE_VIEWS}" && ${#error_views[@]} -gt 0 ]] && backupErrorViewsSql && deleteErrorViews

命令优化x

具体方案之前,先加上一些基本的备份对象

--hex-blob --single-transaction --quick --routines --triggers

方案一 160分钟

单线程直接执行mysqldump,大概160分钟

> /data01/chroot/usr/local/mysql5.7.23/bin/mysqldump -udbXXXX -pXXXX --all-databases --hex-blob --ignore-table=netcxx.xxxxx --ignore-table=netxxx.rxxx(此处很多忽略的视图) | gzip > /temp/back0129.sql.gz

方案二 90分钟

考虑一个表一个文件,10个线程,大概90分钟;TODO 测试增加线程

multidump.sh[lines=25..55].

multidump() {

rm -rf ${WORKDIR}/backup

mkdir -p ${WORKDIR}/backup

COMMIT_COUNT=0

COMMIT_LIMIT=10

error_views_file="${WORKDIR}/error_list"

DBTBS=(`cat ${WORKDIR}/listOfTables`)

i=1

for DBTB in ${DBTBS[@]};do

echo "processing $i/${#DBTBS[@]}"

((i++))

DB=`echo ${DBTB} | sed 's/\./ /g' | awk '{print $1}'`

TB=`echo ${DBTB} | sed 's/\./ /g' | awk '{print $2}'`

if [[ "X"`grep -w ${DBTB} ${error_views_file}` != X"" ]];then

echo skip "${DBTB}"

continue

fi

dumpIt ${DB} ${TB}

(( COMMIT_COUNT++ ))

if [[ ${COMMIT_COUNT} -eq ${COMMIT_LIMIT} ]]

then

COMMIT_COUNT=0

wait

fi

done

if [[ ${COMMIT_COUNT} -gt 0 ]]

then

wait

fi

}

方案三 15-22分钟

mysqlpump 是 mysql 提供的工具,文档和网上教程一大堆,这里只谈使用。 可以很直观的看到执行到哪个表,剩余多少行;注意:mysqlpump遇到错误会停止继续,比如命令不正确、数据结构有问题。而且这个数据库开启GTID,所以如果你的数据库没有此选项,要把命令中的—set-gtid-purged=ON去掉。

两种压缩格式的时间差距还是很明显:

mysqlpump -uusername -ppassword –compress-output=ZLIB –default-parallelism=100 –set-gtid-purged=ON –hex-blob –add-drop-database –add-drop-table –add-drop-user –users |gzip > /temp/test.sql.gz

Dump progress: 0/xx tables, xx/xxxxxxxxx rows Dump completed in xxxxxx milliseconds

mysqlpump -uusername -ppassword –compress-output=LZ4 –default-parallelism=100 –set-gtid-purged=ON –hex-blob –add-drop-database –add-drop-table –add-drop-user –users > /temp/testlz4.lz4

方案四

mysqlpump 可以针对database进行多线程导出,但是有时候数据分布不均匀,90%的数据可能都在一个表内,这种情况下mysqlpump显得无能为力。有没有可以对单个大表继续进行分拆的工具呢? mydumper 可以做这件事。

首先统计表的分布

totalSql="SELECT IFNULL(SUM(TABLE_ROWS),0) as t_rows_sum FROM information_schema.tables WHERE TABLE_SCHEMA NOT IN ('mysql','information_schema','performance_schema','sys');"

eachTableSql="SELECT CONCAT(TABLE_SCHEMA,'.',TABLE_NAME) AS table_name, IFNULL(TABLE_ROWS,0) as table_rows FROM information_schema.tables WHERE TABLE_SCHEMA NOT IN ('mysql','information_schema','performance_schema','sys') ORDER BY 2;"

验证mydumper导出database的效率

- 只导出netcare,17分钟-20分钟左右

mydumper -u username -p password -v 3 -B databaseName –triggers –events –routines –rows=500000 –compress-protocol -c -t threadNum.ie.100 –trx-consistency-only –outputdir /temp/mydumper

验证mydumper导出某一个大表的效率

https://dev.mysql.com/doc/mysql-enterprise-backup/8.0/en/

方案五 优化前:21分钟

unset tempdir

tempdir=/data03/backup_`date '+%y%m%d%H%M%S'`

mkdir ${tempdir}

~/mysqlbackup -udbAdmin -pabcd1234 --backup-dir=${tempdir} --compress backup

echo "Successfully backing up data to ${tempdir}"

# 有待优化 https://dev.mysql.com/doc/mysql-enterprise-backup/4.1/en/backup-capacity-options.html

--limit-memory=MB (default 100)

--read-threads=num_threads (default 1)

--process-threads=num_threads (default 6)

--write-threads=num_threads (default 1)

~/mysqlbackup -udbAdmin -pabcd1234 --backup-dir=${tempdir} --compress backup

#优化后: 15分钟

# 整库备份到单个文件

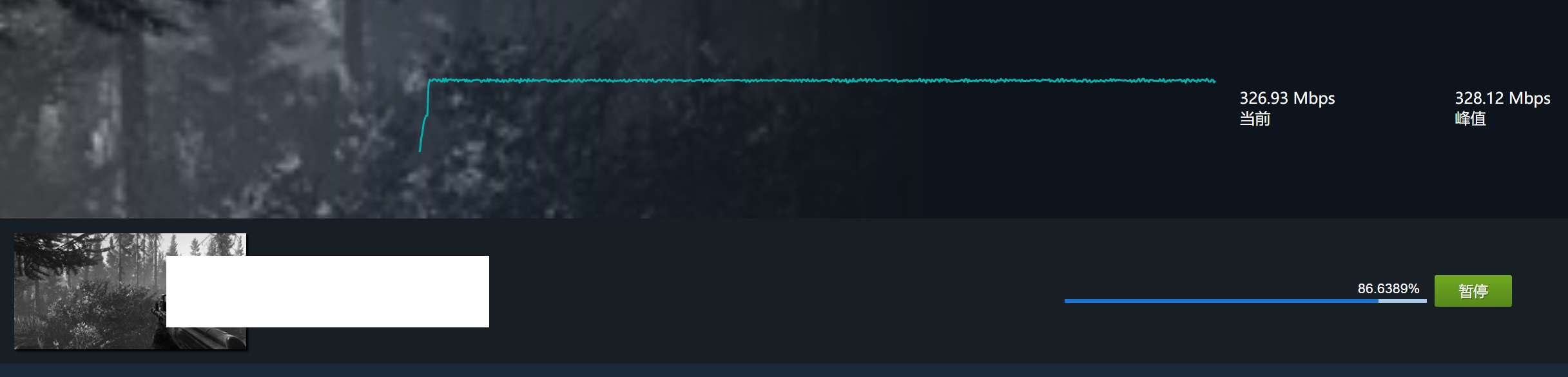

~/mysqlbackup -udbAdmin -pabcd1234 --compress --compress-level=5 --limit-memory=1024 --read-threads=10 --process-threads=15 --write-threads=10 --backup-dir=${tempdir} --backup-image=/data03/`basename ${tempdir}`.bin backup-to-image

#直接备份到目标机器:

~/mysqlbackup -udbAdmin -pabcd1234 --compress --compress-level=5 --limit-memory=1024 --read-threads=10 --process-threads=15 --write-threads=10 --backup-dir=${tempdir} --backup-image=- backup-to-image | ssh root@10.15.32.73 'cat > /opt/temp_for_restore/my_backup.bin'

具体备份恢复使用见另一篇文章 mysql gtid 主从复制数据迁移(物理备份)